Neural Prism 3157080190 Apex Node

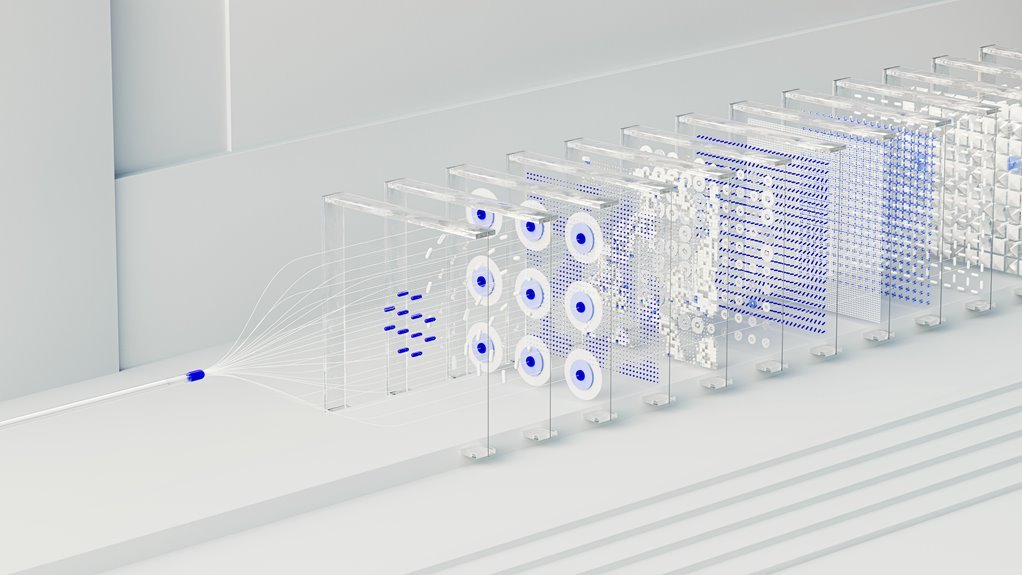

The Neural Prism 3157080190 Apex Node is a modular accelerator that combines neural processing with high-bandwidth routing to optimize tensor dispatch and policy-driven dataflow. Its architecture emphasizes determinism, scalability, and energy efficiency, keeping data local across heterogeneous cores and reducing memory stalls. By pairing its interconnects with precision schedulers, it accelerates matrix-stage pipelines and supports flexible deployment. The intended result is reproducible, QoS-governed performance across varied workloads, which in turn raises questions about integration trade-offs and deployment models.

What Is the Neural Prism 3157080190 Apex Node?

The Neural Prism 3157080190 Apex Node is a specialized component designed to integrate neural processing with high-bandwidth data routing within advanced AI architectures. It functions as a modular accelerator, streamlining tensor dispatch and policy-driven dataflow. The design emphasizes determinism and scalability while preserving architectural neutrality, so teams can experiment freely within constrained, measurable performance envelopes.

How the Architecture Speeds Up Deep Learning Workloads

The Neural Prism design keeps data close to the cores that consume it and optimizes bandwidth across heterogeneous cores, which reduces memory stalls.

The Apex Node architecture shortens individual pipeline stages, enabling more parallelism and lower latency for matrix operations.

Specialized interconnects and precision-tuned schedulers align compute with memory, boosting throughput for deep learning workloads while preserving energy efficiency and deterministic behavior.
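The idea of aligning compute with memory can be illustrated with a toy locality-aware scheduler. This is a minimal sketch, not the Apex Node's actual scheduling logic: the `Core` class, the `assign` function, and the greedy policy are all hypothetical, and they assume each task is described only by the set of tensors it reads.

```python
from dataclasses import dataclass, field


@dataclass
class Core:
    """Hypothetical compute core with its own local memory."""
    name: str
    resident: set = field(default_factory=set)  # tensor ids already in local memory
    load: int = 0                               # tasks assigned so far


def assign(tasks, cores):
    """Greedy locality-aware placement (illustrative assumption only).

    tasks: list of (task_id, set_of_tensor_ids) in dispatch order.
    Returns a dict mapping task_id to the chosen core name.
    """
    placement = {}
    for task_id, tensors in tasks:
        # Prefer the core that already holds the most inputs;
        # break ties in favor of the less loaded core.
        best = max(cores, key=lambda c: (len(tensors & c.resident), -c.load))
        placement[task_id] = best.name
        best.resident |= tensors  # the inputs are now local to that core
        best.load += 1
    return placement


cores = [Core("a", {"w1"}), Core("b", {"w2"})]
tasks = [("t1", {"w1", "x"}), ("t2", {"w2"}), ("t3", {"x"})]
print(assign(tasks, cores))
```

In this example `t3` follows `t1` onto core `a` because `x` is already resident there, which is the kind of avoided transfer that reduces memory stalls. A real scheduler would also need to weigh memory capacity, eviction, and interconnect cost.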

Real-World Use Cases and Performance Benchmarks

Real-world deployments demonstrate how the Neural Prism Apex Node translates theoretical throughput gains into tangible performance across heterogeneous workloads.

Benchmark suites reveal consistent throughput scaling for convolutional, transformer, and graph workloads, with stable latency under load.
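One way to check a throughput-scaling claim like this is to compare measured speedup against ideal linear speedup. The sketch below assumes throughput has been measured at several core counts; the function name `scaling_efficiency` and the input numbers are placeholders for illustration, not published results for the Apex Node.

```python
def scaling_efficiency(throughput_by_cores):
    """Map each core count to measured speedup divided by ideal (linear) speedup.

    A value of 1.0 means perfectly linear scaling relative to the smallest
    configuration; values below 1.0 indicate scaling losses.
    """
    base_cores = min(throughput_by_cores)
    base_throughput = throughput_by_cores[base_cores]
    return {
        cores: (throughput / base_throughput) / (cores / base_cores)
        for cores, throughput in sorted(throughput_by_cores.items())
    }


# Placeholder inputs for illustration only, not measured results.
example = {1: 100.0, 2: 200.0, 4: 300.0}
print(scaling_efficiency(example))  # {1: 1.0, 2: 1.0, 4: 0.75}
```

Running the same calculation separately for convolutional, transformer, and graph workloads would show whether scaling is in fact consistent across them.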

The Neural Prism design enables modular deployment, predictable handling of resource contention, and reproducible results across accelerators, fabrics, and memory hierarchies, which supports the case for the Apex Node as a versatile accelerator platform.

Integration Considerations: Latency, Cost, and Scalability

When integrating the Neural Prism Apex Node, latency, cost, and scalability must be evaluated as interconnected constraints rather than isolated metrics. In practice this means assessing end-to-end latency across heterogeneous workloads, cost per unit of performance under varying fabric configurations, and the impact of horizontal scaling on contention and cache coherence.

Latency considerations influence architectural trade-offs, while scalability challenges shape resource provisioning, isolation guarantees, and deterministic QoS across diverse deployment scenarios.
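Treating latency and cost as joint constraints can be sketched as a simple filter-then-rank step: discard configurations that miss a tail-latency budget, then order the rest by cost per unit of throughput. Everything here is an assumption for illustration, including the field names (`latencies_ms`, `hourly_cost`, `throughput`), the nearest-rank percentile method, and the choice of p99 as the latency metric.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def evaluate(config):
    """Summarize one hypothetical deployment configuration."""
    return {
        "name": config["name"],
        "p99_ms": percentile(config["latencies_ms"], 99),
        "cost_per_unit": config["hourly_cost"] / config["throughput"],
    }


def feasible(configs, p99_budget_ms):
    """Keep configs within the latency budget, cheapest per unit of throughput first."""
    summaries = [evaluate(c) for c in configs]
    within = [s for s in summaries if s["p99_ms"] <= p99_budget_ms]
    return sorted(within, key=lambda s: s["cost_per_unit"])


# Placeholder configurations for illustration only, not measured results.
configs = [
    {"name": "small", "latencies_ms": [5, 6, 7, 20], "hourly_cost": 2.0, "throughput": 100.0},
    {"name": "large", "latencies_ms": [3, 4, 4, 5], "hourly_cost": 6.0, "throughput": 200.0},
]
print([s["name"] for s in feasible(configs, p99_budget_ms=10)])
```

With a 10 ms budget only the `large` placeholder configuration survives, even though `small` is cheaper per unit of throughput, which shows why the two metrics cannot be judged in isolation.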

Conclusion

The Neural Prism 3157080190 Apex Node is a tightly integrated mix of neural compute and high-bandwidth routing, engineered for deterministic, QoS-governed performance across heterogeneous cores. Its architecture emphasizes locality, scheduler precision, and energy efficiency to minimize memory stalls and accelerate tensor dispatch. Throughput is expected to scale close to linearly with core specialization, which would yield near-ideal efficiency gains. This combination positions the Apex Node as a compelling accelerator for latency-sensitive, multi-tenant deep learning pipelines while maintaining scalable, cost-aware deployment profiles.