Neural Flow 3202560223 Apex Node

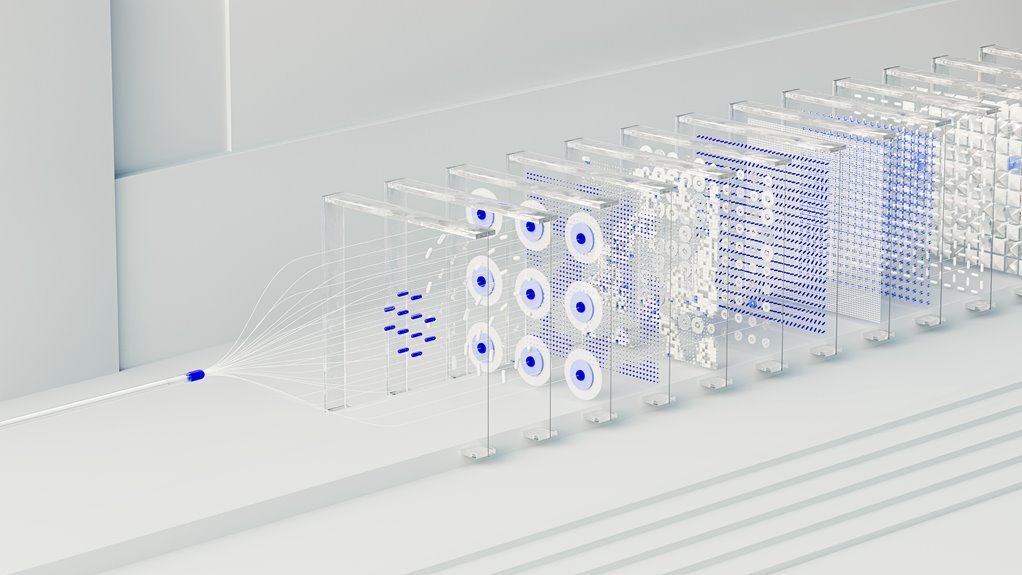

The Neural Flow 3202560223 Apex Node is a modular orchestrator for neural pipelines. It maps input streams to computation steps and to the resources that run them, so each stage of the workflow has a clear role. The design targets low latency without sacrificing accuracy and emphasizes governance and reproducibility. It supports edge and cloud deployments with observable, scalable processing. The sections below cover its architecture, integration approaches, and trade-offs.

What Is Neural Flow 3202560223 Apex Node and Why It Matters

Neural Flow 3202560223 Apex Node is a modular component designed to manage and optimize data processing within neural network workflows. It acts as a flexible conduit that aligns input streams with computation steps and scalable resources. By making each stage's responsibility explicit, the Apex Node aims to reduce bottlenecks and make runs easier to reproduce.

Within this pairing, Neural Flow emphasizes transparent governance, while the Apex Node focuses on efficient, adaptable performance across models and datasets.
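To make the idea of aligning input streams with named computation steps concrete, here is a minimal sketch. The `Stage` and `Pipeline` classes and the two example steps are hypothetical names chosen for illustration; they are not part of any published Apex Node interface.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List, Tuple


@dataclass
class Stage:
    """One named computation step (hypothetical, for illustration only)."""
    name: str
    fn: Callable[[Any], Any]


@dataclass
class Pipeline:
    """Runs stages in order and records which ones ran."""
    stages: List[Stage] = field(default_factory=list)

    def add(self, name: str, fn: Callable[[Any], Any]) -> "Pipeline":
        self.stages.append(Stage(name, fn))
        return self  # returning self allows chained .add() calls

    def run(self, item: Any) -> Tuple[Any, List[str]]:
        trace: List[str] = []
        for stage in self.stages:
            item = stage.fn(item)      # output of one stage feeds the next
            trace.append(stage.name)   # the trace supports reproducibility checks
        return item, trace


# Assumed example steps: scale values by their maximum, then average them.
pipeline = (
    Pipeline()
    .add("normalize", lambda xs: [x / max(xs) for x in xs])
    .add("score", lambda xs: sum(xs) / len(xs))
)

result, trace = pipeline.run([2.0, 4.0, 8.0])
```

Keeping the stage names in a trace is one simple way to make each run's path through the workflow explicit and repeatable.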

Architecture That Lowers Latency Without Sacrificing Accuracy

The architecture surrounding the Neural Flow 3202560223 Apex Node is engineered to reduce latency while preserving model accuracy. It pursues this through streamlined data paths and parallel processing while maintaining inference quality.
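As a rough illustration of the parallel-processing idea, the sketch below fans independent chunks out to a thread pool from Python's standard library. The `preprocess` function and its sleep are placeholders standing in for I/O-bound work; the actual data paths used by the Apex Node are not described in this article.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import List


def preprocess(chunk: List[int]) -> List[int]:
    """Placeholder step; the sleep stands in for I/O-bound work."""
    time.sleep(0.01)
    return [x * 2 for x in chunk]


def run_parallel(chunks: List[List[int]], max_workers: int = 4) -> List[List[int]]:
    """Process independent chunks concurrently.

    Executor.map returns results in input order, so downstream steps
    see the same ordering as a sequential loop would produce.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(preprocess, chunks))


outputs = run_parallel([[1, 2], [3], [4, 5, 6]])
```

Threads help mainly when the work waits on I/O. CPU-bound steps in pure Python would more likely need processes or a native runtime to see a latency benefit.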

Design choices acknowledge accuracy trade-offs, favoring methods that cut latency without giving up the precision a task requires. This balance supports responsive deployments and scalable performance across diverse workloads.
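One simple way to express this balance is to set an accuracy floor and pick the fastest option that clears it. The sketch below assumes a hypothetical `Variant` record, and the latency and accuracy figures are made-up placeholders, not measurements of any real model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Variant:
    """A candidate model configuration (hypothetical record)."""
    name: str
    latency_ms: float
    accuracy: float


def pick_variant(variants: List[Variant], min_accuracy: float) -> Optional[Variant]:
    """Return the lowest-latency variant meeting the accuracy floor, or None."""
    eligible = [v for v in variants if v.accuracy >= min_accuracy]
    if not eligible:
        return None
    return min(eligible, key=lambda v: v.latency_ms)


# Placeholder numbers for illustration only.
VARIANTS = [
    Variant("fp32", latency_ms=42.0, accuracy=0.950),
    Variant("fp16", latency_ms=25.0, accuracy=0.948),
    Variant("int8", latency_ms=12.0, accuracy=0.931),
]

chosen = pick_variant(VARIANTS, min_accuracy=0.94)
```

With a floor of 0.94, the selection skips the fastest variant because it falls below the required accuracy. Returning `None` when nothing qualifies forces the caller to decide explicitly whether to relax the floor.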

Real-Time Use Cases and Integration Tips for Edge and Cloud

For real-time scenarios, edge deployments prioritize low latency and local decision-making, while cloud-integrated workflows emphasize centralized processing and scalable aggregation. In both patterns, the Apex Node coordinates data streams so that edge and cloud components can collaborate.

Real-time operation requires careful orchestration, deterministic timing, and lightweight models. Adoption is easier with modular integration, observability, and clear data governance, which together sustain flexibility and reliability.
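The edge-versus-cloud split described above can be sketched as a small routing rule. The `route` function, the `needs_aggregation` flag, and the default 80 ms round-trip estimate are all assumptions made for illustration; a real deployment would measure its own network timings.

```python
def route(deadline_ms: float, needs_aggregation: bool, cloud_rtt_ms: float = 80.0) -> str:
    """Decide where a request should run (hypothetical rule).

    cloud_rtt_ms is an assumed estimate of the round trip to the cloud.
    """
    # If the cloud cannot answer before the deadline, decide locally.
    if deadline_ms < cloud_rtt_ms:
        return "edge"
    # With time to spare, send work that needs centralized aggregation to the cloud.
    return "cloud" if needs_aggregation else "edge"


decisions = [
    route(deadline_ms=20.0, needs_aggregation=True),
    route(deadline_ms=500.0, needs_aggregation=True),
    route(deadline_ms=500.0, needs_aggregation=False),
]
```

The rule is deliberately deterministic: the same inputs always yield the same placement, which keeps timing behavior predictable and easy to audit.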

Trade-Offs, Evaluation Criteria, and Scale Considerations

Trade-offs in neural flow deployments arise from balancing latency, accuracy, and resource usage across edge and cloud environments. Evaluation criteria prioritize latency, energy efficiency, and throughput stability, alongside model accuracy and calibration.

Scale considerations address distributed inference, model partitioning, and data locality. Confidence in these trade-offs comes from empirical benchmarking, reproducible metrics, and transparent governance for deployment, rollback, and safety constraints.
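For the benchmarking step, a reproducible summary can be as simple as a few fixed statistics over recorded latencies. The sketch below uses nearest-rank percentiles and the coefficient of variation as a rough stability proxy. The function names and the sample values are illustrative assumptions, not results from any real system.

```python
import math
import statistics
from typing import Dict, List


def percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(latencies_ms: List[float]) -> Dict[str, float]:
    """Median, tail latency, and relative spread for one benchmark run."""
    mean = statistics.fmean(latencies_ms)
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
        # Coefficient of variation: lower means steadier latency.
        "cv": statistics.pstdev(latencies_ms) / mean,
    }


# Made-up sample with one slow outlier, for illustration only.
sample = [10, 12, 11, 13, 50, 12, 11, 10, 12, 11]
report = summarize(sample)
```

Reporting a tail percentile next to the median matters because a single slow request, like the outlier in this sample, barely moves the median but dominates the tail.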

Conclusion

The Apex Node serves as the coordination point for neural pipelines, routing data streams deliberately rather than reactively. It treats latency and accuracy as goals to be balanced together, with governance indicating when a deployment should change course. Across edge and cloud, its orchestration is meant to stay steady, scalable, and observable. Its value lies in combining speed with reliability and in making a complex workflow easier to reason about.